Materialized lake views (MLVs) in Microsoft Fabric are an effective way to implement medallion architecture declaratively, but once you have a pipeline of MLVs in production, you need visibility into whether they’re current. Fabric’s MLV management area gives you a visual lineage and refresh history, but if you want to build automated alerting, logging, or custom tooling, you need to get that information programmatically. This post walks through one way to do that, using a small demo lakehouse built entirely in a Fabric notebook.

Getting lineage from table properties

When you create a materialized lake view, Fabric automatically populates a table property called fabric.source.entities that describes every immediate upstream source. You can retrieve it with SHOW TBLPROPERTIES and parse the JSON payload:

props = spark.sql(f"SHOW TBLPROPERTIES dbo.mlvc").collect()

source_entities_raw = next(

(row["value"] for row in props if row["key"] == "fabric.source.entities"),

None

)

sources = [e["tableName"] for e in json.loads(source_entities_raw)]The payload includes workspace, artifact, and schema context for each source, which means it can support cross-lakehouse lineage scenarios as that capability matures. Combining this across all MLVs in a schema, using SHOW MATERIALIZED LAKE VIEWS IN dbo, gives you the full dependency graph without any hardcoding.

Getting last modified time from source tables

If you have Change Data Feed enabled on your base Delta tables (which you should for optimal MLV refresh anyway) you can retrieve the timestamp of the last committed operation from the Delta transaction log:

history = spark.sql(f"DESCRIBE HISTORY dbo.table1 LIMIT 1").collect()

last_modified = history[0]["timestamp"]DESCRIBE HISTORY returns the full transaction log in reverse chronological order. LIMIT 1 gives you the most recent entry, which corresponds to the last time data was written to the table. This is more reliable than file system metadata because it reflects committed Delta transactions, not just file touches.

Getting last refresh time from an MLV

MLVs are persisted as Delta tables under the hood, so the same DESCRIBE HISTORY approach works for them too:

history = spark.sql(f"DESCRIBE HISTORY dbo.mlva LIMIT 1").collect()

last_refresh = history[0]["timestamp"]This gives you the timestamp of the last time the MLV was successfully refreshed, whether that was triggered by a schedule, a notebook, or manually from the portal.

Detecting staleness

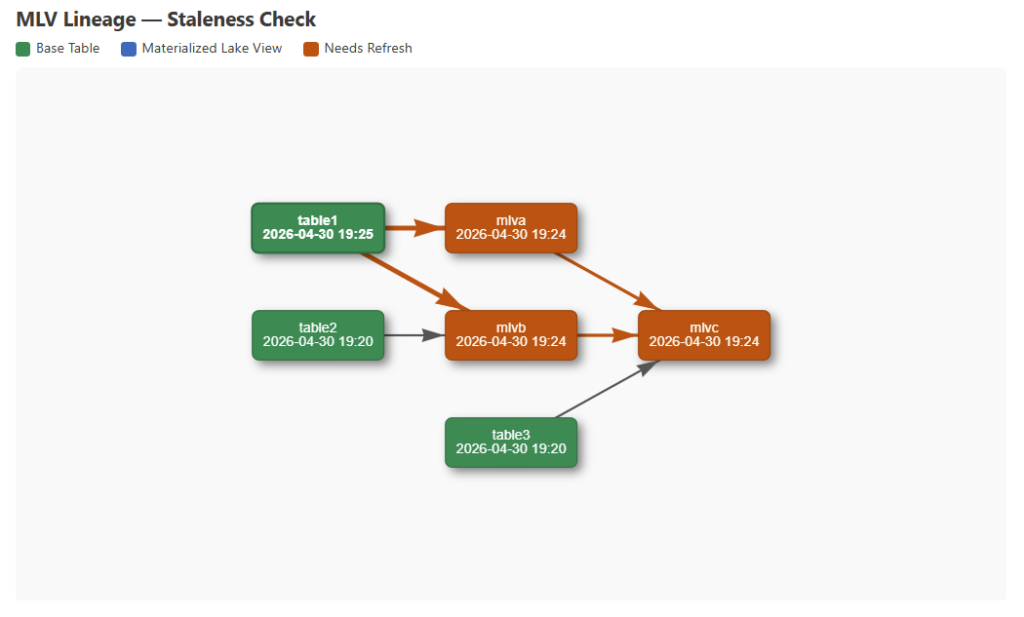

With timestamps for both sources and MLVs in hand, you can compare them to determine whether any MLV is out of date. An MLV is stale if any of its upstream sources have a newer timestamp than the MLV itself. Staleness also propagates downstream — if mlvb is stale, then mlvc which depends on mlvb is also stale regardless of its own source timestamps. From there you can write results to a Delta table for historical tracking, feed them into an alerting pipeline, or visualize them inline in the notebook.

Visualizing dependencies in the notebook

Once you have the lineage graph and timestamps as Python objects, you can render an interactive dependency diagram directly in a Fabric notebook cell using displayHTML. Rather than building the diagram from scratch, I used vis.js, a JavaScript network visualization library that loads directly from a public URL at render time with no package dependencies to manage.

vis.js handles node layout, edge routing, and interactivity out of the box, which makes it a practical choice for notebook-based visualization. The diagram is built by serializing the Python lineage dict and timestamp dict to JSON, injecting them into an HTML string, and passing that to displayHTML. vis.js renders a hierarchical left-to-right layout that mirrors data flow direction, with each node labeled with the object name and its last modified or last refresh timestamp. Nodes are color-coded by type — green for base Delta tables, blue for MLVs — and when staleness detection is enabled, any node that needs a refresh turns orange, as do the edges feeding into it. The resulting diagram is interactive — nodes can be dragged to reposition them, the canvas can be scrolled to zoom, and hovering over a node highlights it and its connected edges, which is useful when tracing dependencies in a more complex pipeline.

One thing worth noting: I attempted to use matplotlib first, which is natively available in Fabric notebooks, but I ran into limitations with arrow routing that made the diagram hard to read when nodes were at different vertical positions. vis.js solved that cleanly without any additional setup, as long as the CDN is accessible from your Fabric capacity.

Walkthrough

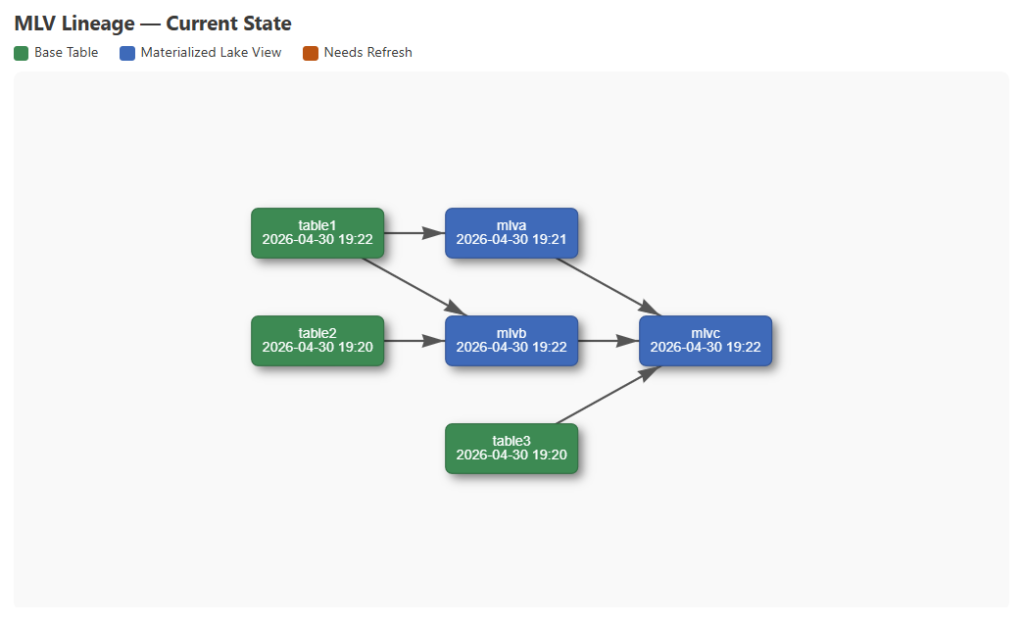

To demonstrate this end to end, my notebook sets up three base Delta tables and three MLVs with the following dependencies:

- mlva is sourced from table1

- mlvb is sourced from table1 and table2

- mlvc is sourced from mlva, mlvb, and table3

Change Data Feed is enabled on all three base tables so the Delta transaction log captures each write, and CDF is set on the MLVs as well so incremental refresh is available. After setup, the notebook refreshes all three MLVs in dependency order — mlva and mlvb first, then mlvc — to get a clean baseline. You could also refresh them from the Materialized lake views tab on the Lakehouse item in the Fabric portal.

With everything refreshed, parsing fabric.source.entities and DESCRIBE HISTORY across all objects gives us the full lineage graph and a timestamp for every node. At this point nothing is stale, and the baseline diagram reflects that:

Next, three new rows are inserted into table1. That single write makes table1 newer than mlva, mlvb, and mlvc — all three depend on table1 either directly or through a dependency. Re-fetching timestamps and running the staleness check propagates that through the graph, and the updated diagram highlights all affected nodes:

The Fabric portal already gives you a visual lineage and refresh history for MLVs, but doing this programmatically means you can build on top of it — logging refresh state over time, triggering alerts when MLVs go stale, or embedding a live diagram in an operational notebook. The full notebook is available in my GitHub repo.

What would you do with programmatic access to MLV lineage and refresh state? Let me know in the comments.

The post Programmatically Retrieving MLV Lineage and Refresh Times first appeared on Data Savvy.

There is currently no way to set a default value in a Power BI slicer visual. If you create a report with a slicer for month and choose the current month (e.g. April 2026), save the report, and then come back to the report a month later, your original selection will be enforced and the data will now show the prior month. So how do you make reports with slicers show data for the current month by default while allowing users to select other months as needed? This video shows 3 options. My current personal favorite is the button slicer solution I show as the third option.

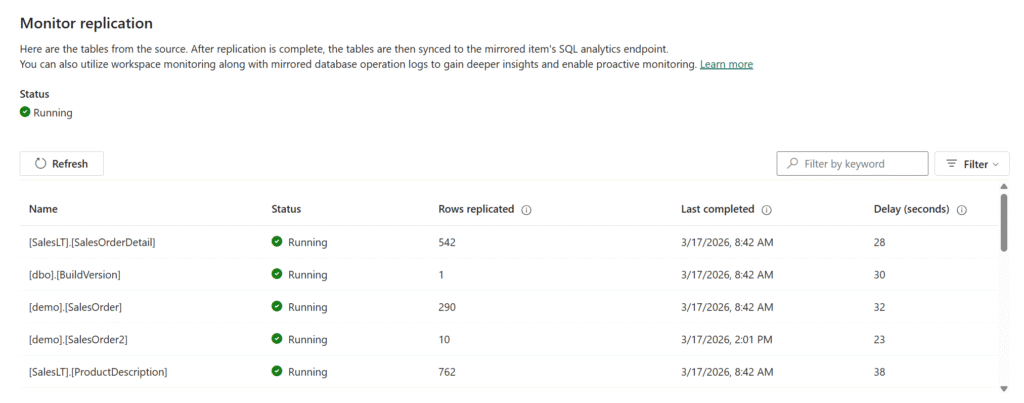

Monitoring in the Fabric Portal

The item page for the mirrored database in the Fabric portal shows replication status for the database overall as well as for each table. The per-table status includes:- rows replicated: the cumulative count of replicated rows, including all inserts, updates, and deletes applied to the target table

- last completed: the last time the mirrored table was refreshed from the source

- delay (seconds): the time between when a change was committed at the source and when it was successfully applied to the destination

Monitoring in the Database

When you configure Fabric mirroring, it enables the change feed on the database and the tables you have selected to mirror. Once everything is configured, there are some system views and stored procedures you can access to see what’s going on.sys.dm_change_feed_log_scan_sessions

This DMV is your primary window into change feed health and activity. It returns one row per log scan session, plus an aggregate row wheresession_id = 0 that summarizes all sessions since the instance last started. The aggregate row is useful for understanding overall health since the instance last started; the individual session rows show you what’s happening in discrete scans.

You want to see sessions completing without errors, with tran_count incrementing and currently_processed_lsn or currently_processed_commit_lsn advancing over time. If sessions are stalling at the same batch_processing_phase repeatedly, or error_count is climbing, something needs attention.

The schema_change_count column tracks DDL-related log records processed in a session. Note that this is not a 1:1 mapping with the number of DDL operations — some operations, like adding, altering, or dropping a column, generate two log records per operation, so the count may be higher than you expect.

sys.dm_change_feed_errors

Whenerror_count in the sessions DMV is non-zero, this is where you find out what went wrong. Some errors are transient and will resolve on their own; others won’t. Repeated errors of the same type are a signal to investigate rather than wait it out.

sys.sp_help_change_feed

This stored procedure gives you a configuration-level view of which tables are enrolled in mirroring and their current state. It’s useful after initial setup to confirm everything was picked up correctly, and when a table stops replicating and you want to verify it’s still enrolled.sys.databases

Unlike CDC, the change feed doesn’t write to change tables in the source database, so it won’t directly cause the transaction log to grow. However, it does hold log truncation until changes are successfully replicated to Fabric. You can useis_data_lake_replication_enabled = 1 to filter sys.databases to only the databases where Fabric mirroring is enabled. A value of REPLICATION for log_reuse_wait_desc means mirroring is currently holding log truncation — not necessarily something that needs immediate action, but if log usage is also growing, that’s a signal that mirroring may not be keeping pace and warrants investigation. If the log hits its size limit, writes to the database will fail.

Extended Events

Microsoft also provides an extended events session you can use for deeper troubleshooting. The session captures change feed activity including errors, snapshots, performance, and scheduler events. Because it can be verbose, Microsoft recommends only running it when you’re actively troubleshooting a problem rather than leaving it on all the time. You can find the session definition in the Troubleshoot Fabric Mirrored Databases from SQL Server documentation.Files in OneLake

You can use Azure Storage Explorer to browse the files for your Fabric mirrored database. The Monitoring folder contains two files:- tables.json shows each mirrored table along with its status, any error message, and metrics.

- replicator.json shows the overall database replication status along with any error message.

Manifest_1.json file in the LandingZone folder is a newline-delimited JSON log that records everything the change feed has published to OneLake — initial snapshots, incremental change batches, DDL events, and table creation notifications. It’s not something you’d monitor routinely, but it’s useful for troubleshooting — for example, to confirm that a snapshot completed and how many rows were captured, to see whether incremental batches are being written, or to trace when a DDL change occurred and verify that a re-snapshot was triggered as a result.

Within each table’s subfolder you’ll find a TableSchema.json file with the table’s schema definition, a FullCopyData folder where the initial snapshot parquet files are written, and a ChangeData folder where incremental CSV change files land after the snapshot completes. If you’re troubleshooting a data discrepancy or want to confirm that specific changes have made it to OneLake, you can browse these folders directly to check whether the expected files are present.

Knowing Where to Look

For day-to-day health checks, the portal monitoring page andsys.dm_change_feed_log_scan_sessions will cover most of what you need. If you see errors or stalled sessions, sys.dm_change_feed_errors and sys.sp_help_change_feed are the next stop. The OneLake files and extended events are there when you need to dig deeper into a specific problem. Mirroring continues to get new features and changes, so keep an eye on the Microsoft Fabric Mirrored Databases from SQL Server documentation for updates.

The post Monitoring Fabric Mirroring for SQL 2025 first appeared on Data Savvy.Mirroring of SQL Server databases in Microsoft Fabric was first released in public preview in March 2024. Mirrored databases promise near-real-time replication without the need to manage and orchestrate pipelines, copy jobs, or notebooks. John Sterrett blogged about them last year here. But since that initial release, the mechanism under the hood has evolved significantly. Let's talk about Fabric Mirroring: Change Feed vs CDC Explained.

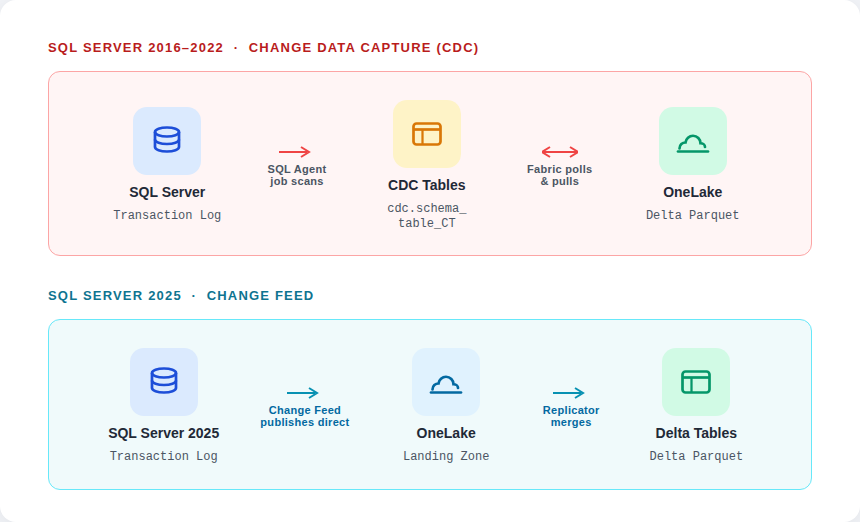

How Fabric Mirroring Works in SQL Server 2016–2022 (CDC)

When mirroring was first released for Azure SQL Database, it used Change Data Capture (CDC). That is still what is used to mirror SQL Server 2016 – 2022.

CDC works by asynchronously scanning the transaction log to find changes related to tracked tables, then writing those changes to dedicated change tables — one per tracked source table, in the format cdc.schema_tablename_CT. A SQL Server Agent job (sys.sp_cdc_scan) is responsible for that log scan and write. Fabric’s replication layer then polls those change tables and pulls changes into OneLake, where they’re converted to Delta Parquet format. The result is a two-hop process: log → change table → OneLake. That intermediary write step is where the overhead lives. If you have a busy SQL Server, this overhead may not be acceptable.

Setting up CDC for SQL Server 2016–2022 requires the fabric_login principal to be a member of the sysadmin server role, at least temporarily, and any future CDC maintenance also requires sysadmin membership. For security-conscious organizations, that’s a significant ask. You can drop the login from sysadmin after CDC is configured, but having to elevate it in the first place causes friction.

In SQL Server 2016–2022, if a table’s schema changes after CDC is enabled, the mirrored table schema no longer matches the source, and mirroring fails. Getting replication back on track requires manually disabling and re-enabling CDC on the affected tables.

SQL Server 2025 Fabric Mirroring: The Change Feed Explained

SQL Server 2025 has a much better solution: the change feed. Rather than routing changes through change tables, the change feed scans the transaction log at a high frequency and publishes committed changes directly to a landing zone in OneLake. Fabric’s replicator engine then merges those files into the target Delta tables. The intermediary write step is gone.

Because the change feed doesn’t write data back into the source database, it carries lower overhead than CDC. There are no change tables to maintain, no SQL Server Agent jobs to keep healthy, and no cleanup jobs running in the background purging old change records. For busy OLTP systems where CDC’s performance overhead was a concern, this is a meaningful improvement.

DDL changes are handled better, too. Rather than failing when a schema change is detected, the change feed triggers a full re-snapshot of the affected table and reseeds the data automatically. That re-snapshot has a cost if the table is large, but it’s self-healing. You won’t come in Monday morning to find mirroring has been broken since Friday’s deployment.

The permissions model is also cleaner. Rather than requiring sysadmin elevation to configure CDC, SQL Server 2025 mirroring uses a system-assigned managed identity to handle outbound authentication to Fabric. You still create a dedicated login with minimal permissions on the source database, but sysadmin is never required.

How to Plan Your Fabric Mirroring Migration: CDC vs Change Feed

CDC-based mirroring is still what SQL Server 2016–2022 uses, and it works. Just go in with eyes open about the performance overhead, the sysadmin requirement, and the DDL limitations.

If you’re already on SQL Server 2025 or planning to upgrade, the change feed makes mirroring a more attractive option than it was under CDC — particularly for busy OLTP systems where the overhead of change table writes was a concern.

Before you enable mirroring on SQL Server 2025, there are a few constraints worth knowing. The source database must be set to the full recovery model (simple recovery is not supported). The change feed is also mutually exclusive with CDC: if CDC is already enabled on a database, you cannot enable Fabric mirroring on that same database. If you’re running CDC today for other consumers, you have a decision to make. You’ll need to decide whether to remove CDC and consolidate on the change feed or keep CDC and find another path for getting that data into Fabric.

Note: As of March 11, 2026, SQL Server 2025 mirroring is supported for on-premises instances only. It is not supported for SQL Server 2025 running in an Azure Virtual Machine or on Linux. It also requires the instance to be connected to Azure Arc with the Azure Extension for SQL Server installed.

Mirroring is still evolving, so it’s worth keeping an eye on the Fabric Mirroring roadmap. And of course, stay tuned for announcements from FABCON next week!

The post How Fabric Mirroring Transformed with SQL Server 2025 first appeared on Data Savvy.

Let me show you some data masking limitations in SQL Server when used for security, and what you should be doing instead (or alongside it) if you’re serious about Zero Trust data security.

TL;DR SQL Server Dynamic Data Masking is a presentation layer feature, not a security feature.

SQL Server Dynamic Data Masking Security?

Dynamic Data Masking intercepts query results at the engine level and replaces sensitive column values with masked versions for users who lack the UNMASK permission. The actual data in the database is unchanged — DDM is purely a presentation-layer filter. Again, DDM is a presentation-layer filer.

Security Pitfall 1: Inferring Masked Values with WHERE Clause Attacks

This is the big one. SQL Server Dynamic Data Masking masks the output of a query. It does nothing to prevent a user from using the masked column in a WHERE clause.

Here’s what that looks like in practice:

-- The user can't see the salary column directly (it's masked)

SELECT EmployeeID, Salary FROM HR.Employees;

-- Returns: 1, xxxx

-- But they CAN do this:

SELECT EmployeeID FROM HR.Employees WHERE Salary BETWEEN 95000 AND 105000;

-- Returns rows — meaning they just learned who earns in that range

By running enough range queries, a patient attacker can binary-search their way to the exact value of any masked numeric column. For salary data, SSNs, account numbers, or any structured numeric field, this completely defeats the purpose of masking the data.

Microsoft’s own documentation acknowledges this. It’s not a bug — it’s a documented architectural constraint. DDM was never designed to stop a determined insider.

SQL Server Dynamic Data Masking Security – SSN Demo

CREATE DATABASE [demo]

GO

USE [demo]

go

CREATE TABLE dbo.Person (

PersonID int NOT NULL

CONSTRAINT PK_dboPerson

PRIMARY KEY CLUSTERED identity

,FirstName varchar(50) NULL

,LastName varchar(50) NULL

,SSN varchar(11) NULL

)

GO

INSERT INTO dbo.Person (FirstName, LastName, SSN)

VALUES

('Patricia', 'Smith', '760-36-4013'),

('Linda', 'Jones', '755-14-8936'),

('John', 'Lee', '433-05-0489'),

('John', 'Miller', '239-65-9864'),

('James', 'Martin', '204-92-8929'),

('Jessica', 'Davis', '460-76-4558'),

('James', 'Garcia', '715-55-5575'),

('William', 'Jones', '221-98-5515'),

('Patricia', 'Williams', '390-13-5882'),

('Susan', 'Perez', '271-06-7528'),

('Karen', 'Brown', '388-11-9045'),

('Barbara', 'Perez', '883-47-9460'),

('Michael', 'Williams', '047-85-3734'),

('Barbara', 'Williams', '876-30-1655'),

('Richard', 'Martinez', '465-82-5978'),

('Jennifer', 'Wilson', '364-27-4375'),

('John', 'Perez', '651-22-8752'),

('Linda', 'Garcia', '474-49-4423'),

('Karen', 'Davis', '702-42-0917'),

('Linda', 'Johnson', '825-41-6573');

GO

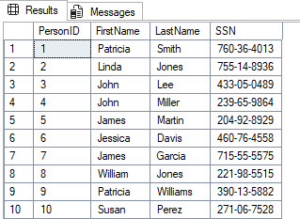

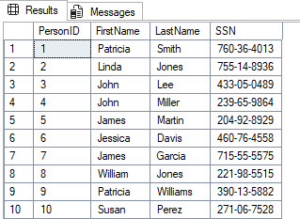

-- Nothing is masked, can see SSN's

select * from dbo.Person

Fictitious SSN data that will be exposed through SQL Server Dynamic Data Masking

/* Mask SSN's */

ALTER TABLE dbo.Person

ALTER COLUMN SSN

ADD MASKED WITH (FUNCTION = 'default()')

GO

/* Create testing user */

CREATE USER [MaskedUser] WITHOUT LOGIN WITH DEFAULT_SCHEMA=[dbo]

GO

GRANT SELECT ON dbo.Person TO [MaskedUser];

GO

EXECUTE AS USER = 'MaskedUser';

GO

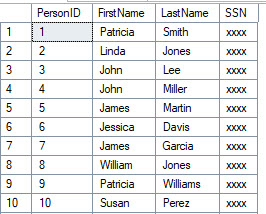

SELECT * FROM dbo.Person

GO

SQL Server Dynamic Data Masking at work. SSNs are masked for this user.

EXECUTE AS USER = 'MaskedUser';

GO

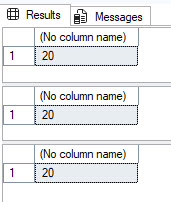

SELECT COUNT(*) FROM dbo.Person

SELECT COUNT(*) FROM dbo.Person WHERE CHARINDEX('-', SSN) = 4

SELECT COUNT(*) FROM dbo.Person WHERE CHARINDEX('-', SSN, 5) = 7

REVERT;

SQL Server Dynamic Data Masking doesn’t mask predicates, just the output results

SET NOCOUNT ON

GO

EXECUTE AS USER = 'MaskedUser';

GO

DECLARE @SSN1 TABLE (

SSN1 char(3) PRIMARY KEY CLUSTERED

);

DECLARE

@SSN1Loop1 int = 0

,@SSN1Loop2 int = 0

,@SSN1Loop3 int = 0

WHILE @SSN1Loop1 < 10

BEGIN

SELECT @SSN1Loop2 = 0

WHILE @SSN1Loop2 < 10

BEGIN

SELECT @SSN1Loop3 = 0

WHILE @SSN1Loop3 < 10

BEGIN

INSERT INTO @SSN1 (SSN1)

SELECT CONVERT(char(1),@SSN1Loop1)

+ CONVERT(char(1),@SSN1Loop2)

+ CONVERT(char(1),@SSN1Loop3)

SELECT @SSN1Loop3 += 1

END

SELECT @SSN1Loop2 += 1

END

SELECT @SSN1Loop1 += 1

END

--SELECT * FROM @SSN1

DECLARE @SSN2 TABLE (

SSN2 char(2) PRIMARY KEY CLUSTERED

)

DECLARE

@SSN2Loop1 int = 0

,@SSN2Loop2 int = 0

WHILE @SSN2Loop1 < 10

BEGIN

SELECT @SSN2Loop2 = 0

WHILE @SSN2Loop2 < 10

BEGIN

INSERT INTO @SSN2 (SSN2)

SELECT CONVERT(char(1),@SSN2Loop1)

+ CONVERT(char(1),@SSN2Loop2)

SELECT @SSN2Loop2 += 1

END

SELECT @SSN2Loop1 += 1

END

--SELECT * FROM @SSN2

DECLARE @SSN3 TABLE (

SSN3 char(4) PRIMARY KEY CLUSTERED

)

DECLARE

@SSN3Loop1 int = 0

,@SSN3Loop2 int = 0

,@SSN3Loop3 int = 0

,@SSN3Loop4 int = 0

WHILE @SSN3Loop1 < 10

BEGIN

SELECT @SSN3Loop2 = 0

WHILE @SSN3Loop2 < 10

BEGIN

SELECT @SSN3Loop3 = 0

WHILE @SSN3Loop3 < 10

BEGIN

SELECT @SSN3Loop4 = 0

WHILE @SSN3Loop4 < 10

BEGIN

INSERT INTO @SSN3 (SSN3)

SELECT CONVERT(char(1),@SSN3Loop1)

+ CONVERT(char(1),@SSN3Loop2)

+ CONVERT(char(1),@SSN3Loop3)

+ CONVERT(char(1),@SSN3Loop4)

SELECT @SSN3Loop4 += 1

END

SELECT @SSN3Loop3 += 1

END

SELECT @SSN3Loop2 += 1

END

SELECT @SSN3Loop1 += 1

END

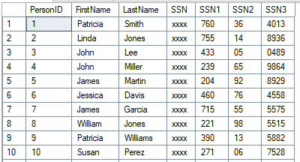

SELECT

P.PersonID

,P.FirstName

,P.LastName

,P.SSN

,T1.SSN1

,T2.SSN2

,T3.SSN3

FROM dbo.Person P

LEFT JOIN @SSN1 T1

ON SUBSTRING(P.SSN,1,3) = T1.SSN1

LEFT JOIN @SSN2 T2

ON SUBSTRING(P.SSN,5,2) = T2.SSN2

LEFT JOIN @SSN3 T3

ON SUBSTRING(P.SSN,8,4) = T3.SSN3

ORDER BY P.PersonID;

GO

REVERT

GO

SQL Server Dynamic Data Masking predicate bypass attack showing SSN exposure

What to do instead: If users shouldn’t be able to filter on a column, they shouldn’t have SELECT on that table at all. Row-Level Security (RLS) combined with column-level encryption or Always Encrypted is the right tool here, not Dynamic Data Masking.

Security Pitfall 2: Anyone with ALTER TABLE Can Remove the Mask Entirely

Okay, let’s assume pitfall #1 didn’t stop you in your tracks from wanting to use SQL Server Dynamic Data Masking for security purposes outside the presentation layer (it should have).

Here’s one that gets overlooked in permission audits. Any user with ALTER TABLE or ALTER ANY MASK permission can simply drop it like it’s hot, the masking function from a column:

-- Requires ALTER TABLE permission (not UNMASK):

ALTER TABLE HR.Employees

ALTER COLUMN Salary DROP MASKED;

After that, everyone can read the data — no UNMASK needed. The mask is gone permanently until someone re-adds it. So users, could drop it, see what they need to see, and add it back.

Security Pitfall 3: Metadata Is Fully Visible to Every User

Even a user with only VIEW ANY DEFINITION permissions can see exactly which columns are masked. This where Young Joc would say, “I know you see…”

SELECT

OBJECT_NAME(object_id) AS TableName,

name AS ColumnName,

masking_function

FROM sys.masked_columns;

Security Pitfall 4: DDM Doesn’t Survive Data Movement

This is the one that kills compliance reviews. SQL Server Dynamic Data Masking is defined at the column level in the source table. If Cardi B was a DBA she would say, “Make the Data Move.”

The moment that data moves anywhere else, the mask disappears:

- SELECT INTO / INSERT SELECT: The mask function is not copied to the destination table.

- OPENROWSET / linked server queries: DDM is not enforced on remote result sets.

- BCP / bulk export: Exports the unmasked data if run by a privileged account.

- SSRS, Power BI, or any reporting tool: If the service account has UNMASK (or DDM isn’t configured in the cloud copy), the reports show real data.

- Database backups: Backups contain the actual unmasked data. If the backup is restored by someone with elevated permissions, DDM means nothing.

Teams that use DDM to “protect” data flowing into a staging database or a data warehouse are providing exactly zero protection for that downstream data.

Here is an example of seeing that SELECT INTO doesn’t mask data in the destination table.

EXECUTE AS USER = 'MaskedUser';

GO

SELECT *

FROM dbo.Person2

GO

REVERT

GO

Fictitious SSN data that will be exposed through SQL Server Dynamic Data Masking

What to do instead: Treat data movement as a security event. Apply encryption for data that needs to stay protected during movement. DDM belongs only in the presentation layer, not as a data-pipeline security control.

Security Pitfall 5: The UNMASK Permission A Security Vulnerability Sledgehammer

-- Granting this one permission unlocks everything:

GRANT UNMASK TO [DevUser];

This isn’t a theoretical risk. Dev teams routinely need production access for troubleshooting. If your escalation playbook involves handing out UNMASK, you’ve just handed them a master key.

What to do instead: Upgrade your permission model. If you’re on SQL Server 2022 or Azure SQL, use column-level UNMASK grants. If you’re on older versions, use static data masking with sanitized data for dev access rather than granting UNMASK on production. Again, I wouldn’t use Dynamic Data Masking for security purposes.

So Should You Use DDM at All?

Yes,but in the right context. DDM is legitimately useful for:

- Reducing casual exposure in reporting environments where users cannot probe the data.

- Limiting blast radius if a low-privilege account is compromised (they see masked output, not raw PII)

- Satisfying compliance documentation requirements as only one layer in a defense-in-depth stack.

The problem is treating Dynamic Data masking as your primary security control. In a Zero Trust model, every layer needs to hold up under adversarial conditions. DDM does not by itself. It’s a trip wire, not a vault.

Your actual Zero Trust data security stack in SQL Server should look like this:

| Layer | Tool |

|---|---|

| Authentication | Active Directory Domain Services / Entra ID + MFA |

| Column-level protection | Always Encrypted or Column Level Encryption. |

| Row-level access | Row-Level Security (RLS) |

| Presentation-layer masking | Dynamic Data Masking (DDM) |

| Audit and detection | SQL Server Audit + Microsoft Defender for SQL |

DDM goes in the “presentation layer” bucket. The moment you understand that distinction, you’ll stop over-relying on it.

FAQ: Dynamic Data Masking in SQL Server

Here are some common questions that come up with SQL Server Dynamic Data Masking:

Does SQL Server Dynamic Data Masking protect against SQL injection?

No, Dynamic Data Masking in SQL Server provides zero protection against SQL injection attacks. SQL injection exploits vulnerabilities in how an application constructs queries, allowing an attacker to execute arbitrary SQL against your database.

Is Dynamic Data Masking HIPAA compliant?

Dynamic Data Masking in SQL Server alone is not sufficient for HIPAA compliance, but it can be one contributing layer in a compliant architecture.

What is the difference between DDM and Always Encrypted?

These two features operate at completely different layers of the stack and solve fundamentally different problems. At a high level, Dynamic Data Masking works at the presentation layer, and Always Encrypted encrypts the data.

The Takeaway

Dynamic Data Masking is a convenience feature with security side effects. Dynamic Data Masking is not a security feature with convenience side effects. If you’re using it as your main line of defense against unauthorized data access, you’re one curious person or one clever WHERE clause away from an data exposure incident.

John Sterrett is a Principal at ProcureSQL, a Microsoft Data Platform consultancy specializing in SQL Server performance, security, and Azure migrations. Follow him on YouTube and Instagram for weekly SQL Server content.

Questions or war stories about DDM? Drop them in the comments.

This is the last post in a three-part series exploring the mechanics of Materialized Lake Views (MLVs). The goal is to help you understand how they work and whether they make sense for your environment. What they are, when they help, and when they fall short.

This is the second post in a three-part series exploring the mechanics of Materialized Lake Views. The goal is to help you understand how they work and whether they make sense for your environment. What they are, when they help, and when they fall short.

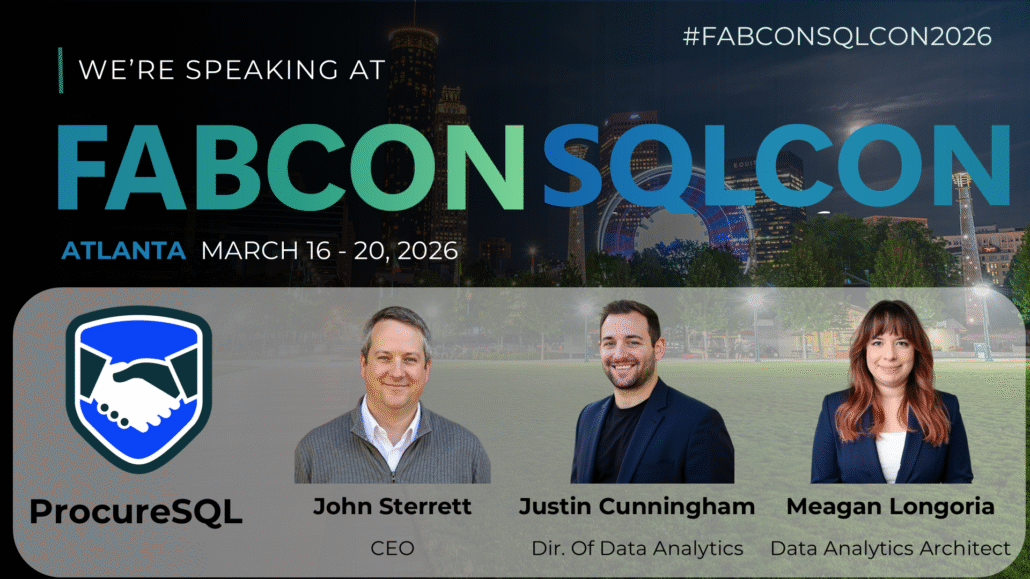

We are happy to announce that three ProcureSQL employees are on the FABCON/SQLCON agenda. These co-located conferences are happening in Atlanta, Georgia March 16 – 20.

FABCON is the largest conference dedicated to Microsoft Fabric, bringing together tech enthusiasts, innovators, and industry leaders to explore the future of data, analytics, business intelligence, and AI integration.

SQLCON stands out as a premier conference within FABCON, featuring sessions and workshops that take you on a dive deep into SQL Server, Azure SQL, SQL in Fabric, SQL Tools, AI Apps with SQL, migration & modernization, optimization & performance, database security, and much more.

Justin Cunningham and Meagan Longoria are presenting Mirroring for SQL Server in Fabric: Inside the Replication Process. This session examines how Mirroring for SQL Server in Fabric really works: what happens on the source database, how data lands in OneLake, data retention policies, monitoring and logging, configuration of replicated objects, and which scenarios require restarting replication.

Justin is also presenting Materialized Lake Views: Simplifying Your Medallion ETL. Materialized lake views turn Spark SQL into auto-orchestrated smart tables in your Fabric lakehouse. This session covers how to replace fragile ETL with declarative medallion layers, understand refresh and lineage, and decide when materialized lake views beat notebooks, jobs, or warehouses.

John Sterrett is presenting Modern Data Protection Strategies with SQL Server. Today, we handle increasing volumes of sensitive data. DBAs and Data Engineers must protect their data from external attackers, employees, and privileged admins like themselves. This session will teach you how to protect your data while maintaining functionality and performance. The session focuses on using column-level encryption, row-level security, and auditing to secure your data.

We are looking forward to speaking and hope to see you in our sessions.

Most SQL Server 2025 reviews focus on features. Here’s what your CFO actually needs to know about the upgrade cost.

The issue I’ve found is that reviews of SQL Server 2025 Upgrade Costs or SQL Server 2025 Upgrade ROI is that showcase the business impact of the upgrade are hard to find and don’t focus solely on its technical aspects. SQL Server 2025 was released in November of 2025. Developers and Data Engineers are thrilled with the new features and upgrades it offers, and you can find plenty of their reviews on YouTube and in their blogs. But if you’re the owner of a company or need your CFO to approve the purchase of this upgrade, you need to know exactly what it will do for your bottom line and your customers.

As the CEO of ProcureSQL, I am responsible for ensuring our clients achieve the highest return on their SQL Server investments. Today, I’m here to speak with decision-makers who are wondering whether SQL Server 2025 is worth the financial investment.

- Standard Edition savings up to $179K on 32-core deployments

- Security upgrades via Entra ID can help avoid the $4.44M average breach cost

- Web Edition discontinued — migration planning required

- Express Edition database limit jumps from 10 GB to 50 GB

- Fabric Mirroring eliminates custom replication pipeline costs

SQL Server 2025 Edition and Licensing Changes

Microsoft has restructured its edition model with SQL Server 2025, delivering significant improvements for organizations that do not plan to upgrade to Enterprise Edition. Here is a look at the SQL Server 2025 Standard vs. Enterprise Edition changes that benefit companies planning to use Standard Edition.

SQL Server 2025 Standard Edition Changes

The Standard edition increased the number of CPU cores from 24 in 2022 to 32 in 2025. With SQL Server 2022, list pricing is $15,123 per 2-core pack for Enterprise Edition and $3,945 per 2-core pack for Standard Edition. With official 2025 pricing at the same rate for Standard Edition and Enterprise Edition, organizations previously forced into Enterprise Edition can potentially save up to $179,000 on a 32-core deployment.

| SQL Server Edition | Cost Per Core | Cores | List Cost |

|---|---|---|---|

| SQL Server 2025 Enterprise | $7,562 | 32 | $241,984 |

| SQL Server 2025 Standard | $1,973 | 32 | $63,136 |

|

SQL Server 2025 Upgrade Cost Estimated Savings

|

$178,848

|

The memory limitation was also increased from 128GB of RAM in SQL Server 2022 to 256GB of RAM in SQL Server 2025. While there has never been a cap on database size in Standard Edition, the number of data pages that can stay in memory has doubled. The increased limitations on CPU and Memory alone should allow your team to process and analyze data faster if you are using the Standard edition of SQL Server and are upgrading to SQL Server 2025.

SQL Server 2025 Standard Edition picks up full Resource Governor support, including the new TempDB governance capabilities. You can define resource pools and workload groups to route sessions and limit or reserve CPU and Memory grants, and throttle I/O requests. Combined with the core and memory increases, Resource Governor enables workload consolidation on Standard Edition that previously required Enterprise Edition. The bottom line impact here is that you can prioritize applications based on resource workloads.

Starting with SQL Server 2025, Power BI Report Server is included with both Standard and Enterprise editions and no longer requires Software Assurance on those 2025 licenses. Previously, it required Enterprise core licenses with active Software Assurance. For organizations currently paying for Enterprise Edition + Software Assurance solely for using Power BI Report Server, this change can result in substantial cost savings.

SQL Server 2025 Express Edition Changes

The Express Edition of SQL Server has always been free, but the 2022 version limits users to 10 GB per database. It was good for applications with very low consumption and computing requirements. SQL Server 2025 Express Edition increases that limit fivefold, to 50 GB per database. While still limited, this is good for datasets that fit within the increased size constraint and for scenarios where a company might not want to pay for the standard version yet.

SQL Server 2025 Upgrade Roadblockers

There are two notable trade‑offs to be aware of before you look at the benefits. Web Edition is discontinued, so if you were using it, you will have to move to another edition when you upgrade. Organizations currently on Web Edition should be aware that there is no direct equivalent at the same price point. Standard Edition is substantially more expensive. In SQL Server 2025, Express Edition loses reporting rights entirely with the conversion of Reporting Services to Power BI Report Server.

An Important Developer Edition Change in SQL Server 2025

Microsoft also implemented an important solution for companies that do not plan to upgrade from Standard Edition to Enterprise Edition. Microsoft finally opened up the Developer Edition (free for anyone who wants to use it for non-production purposes) to let you choose between both Standard and Enterprise editions. This matters to you because previously, your development team might have used the Developer Edition in non-production and then used features that only worked in the Enterprise Edition in production. This required you to either spend more money by upgrading

from Standard Edition to Enterprise Edition or, worse, identify these errors and make quick, critical code changes after you deployed to production.

Now, if you are developing or testing an application, and you know the production workload will use Standard Edition, you can install Developer Standard Edition so the functionality is the same as what you would get with Standard Edition in Production. That way, you don’t have to worry about using features that either won’t work or have a different behavior than you would see from the Enterprise edition.

SQL Server 2025 Upgrade ROI: Security Enhancements That Save You Money

Every SQL login you retire lowers your breach exposure; even a single incident can easily cost more than your entire SQL Server upgrade. Moving to Entra ID and managed identities is cheaper than the cost of a serious breach. According to IBM’s 2025 Cost of a Data Breach Report, global averages are now at 4.44 million USD per incident and over 10.22 million USD in the U.S. That’s why it’s worth spending a moment on Entra ID Managed Identities.

SQL Server 2025 introduces native support for Microsoft Entra ID Managed Identities when you connect SQL Server to Azure with Azure Arc. Managed identities are arguably the biggest security advancement for on-prem SQL Servers in years. When you connect your SQL Server 2025 instance to Azure Arc, a system-assigned managed identity is automatically created for the host machine. You then associate that identity with the SQL Server instance.

The benefit is twofold. Managed Identities greatly improve both inbound and outbound authentication. External users and applications authenticate to SQL Server via Microsoft Entra ID. This can allow you to move from SQL authentication (the worst option for inbound authentication) to Entra ID with Multi-Factor Authentication (MFA), Single Sign-On (SSO), and conditional access. For outbound authentication, SQL Server authenticates to Azure Blob Storage, Azure Key Vault, and other Azure services without storing credentials in config files or connection strings.

“For most organizations, the security and productivity gains here are measured in millions of dollars of risk avoided and months of engineering time saved over the life of the platform.” – Kon Melamud CTO

SQL Server 2025 Upgrade: Developer Productivity and AI Cost Savings

SQL Server 2025 includes Copilot integration in SQL Server Management Studio (SSMS), which has productivity implications for development teams and increases developer efficiency.

SQL Server 2025 enables semantic/AI-powered similarity search directly in the database using a new VECTOR data type and the VECTOR_DISTANCE function. Note: Advanced vector indexing (DiskANN) is currently in public preview, while exact KNN search is currently GA, so plan accordingly for large-scale production deployments.

While this in itself does not provide a no-code experience for non-technical users, it simplifies development, enabling your end users to have better search capabilities with semantic search while letting you ship smarter features without buying and running a separate vector database, keeping both CapEx and OpEx in check. This can open the door for end users to use descriptive searches with SQL Server to filter and dig into your data in ways never before possible. You can leverage native T-SQL filters and semantic search together, giving you the best of both worlds. For example, imagine the benefits to your sales team when they can use semantic search natively within your CRM. They would be more efficient, leading to more deals won, which alone could justify upgrading your entire tech stack.

Finally, on the developer end, the addition of native JSON, while seemingly small, is a big step forward. This simplifies application development and reduces development complexity. That means your application will be built faster, more efficiently, and more reliably. While XML remains fully supported, JSON has become the industry standard for modern APIs and web applications.

Fabric Mirroring: Cut Data Replication Costs with SQL Server 2025

Fabric mirroring reduces the risk of replication errors and data pipeline inconsistencies by automating data loading, so you no longer need a custom-built solution to move your raw data from application databases to your data lake. Eliminating custom pipelines for ingesting your data can save tens of thousands of dollars annually in development and maintenance costs. Not only does this save you time and money, but did you also know that Fabric Mirroring storage is free up to a limit based on the fabric’s capacity? Also, the background Fabric Compute used to replicate your data into Fabric OneLake is free and does not consume capacity.

SQL Server 2025 includes an architectural improvement, the change feed, that simplifies your data replication. Previously, change data capture (CDC) was required, which could be a pain to troubleshoot and manage. Fabric Mirroring makes it easier to move your data from your business-critical application databases to your trusted data foundation (modern data warehouse). The first step, getting raw data reliably into your analytics layer, is where many initiatives fail because problems here snowball quickly. Getting data into a place where it can be transformed and analyzed is paramount. You need this step to be quick, reliable, and consistent with the data changes in your application, without impacting the performance of your line-of-business applications.

Currently, Azure Arc is required to set up SQL Server 2025’s change feed feature to replicate data to Fabric in near real-time. Later in this article, we will also explain why Azure Arc is worth using with your on-premises SQL Servers running SQL Server 2025.

SQL Server 2025 Upgrade: Discontinued Features and Migration Risks

Older versions of SQL Server are approaching or have passed the end of support. SQL Server 2014 is already out of extended support (ended July 9th 2024). SQL Server 2016 support ends on July 14, 2026, which is less than six months away. If you’re still running SQL Server 2016 in production, this should be one of your most urgent planning items. If you need help with your SQL Server 2025 upgrade, reach out. We can help! SQL Server 2017’s extended support ends on October 12, 2027.

I would hate to find out you had a data breach because an exploit was found in an old, unsupported version of SQL Server you were using in production.

As with every major release of SQL Server, some features are discontinued. Each one involves financial considerations that could affect your decision to use SQL Server 2025. Data Quality Services (DQS), Master Data Services (MDS), Synapse Link, Machine Learning Services, and SSRS (replaced by Power BI Report Server).

SQL Server Reporting Services (SSRS) is being replaced by Power BI Report Server (PBIRS)

Simply put: Power BI Report Server is basically “SSRS plus Power BI,” with a more modern portal that lets you run both paginated RDL reports and on-prem Power BI (.pbix) reports. Power BI Report Server still is an on-prem report server that uses the SSRS engine under the covers.

Synapse Link is replaced by Fabric Mirroring.

No need for Synapse Link when we can utilize the newly added change feed to replicate your application data to Fabric.

Machine Learning Services (Python and R) packages are being removed from SQL Server 2025

From a financial perspective, Machine Learning Services (Python and R) never made much sense to me, so I am not surprised it is being discontinued. I don’t think it ever made financial sense to use your expensive SQL Server cores for running Python or R when you could have done so on a separate server.

Data Quality Services (DQS) and Master Data Services (MDS) are being removed

Microsoft is clearly shifting away from in-box, server-bound data quality/master data tooling towards cloud-first governance and MDM patterns. Both products were stagnant and niche in adoption. MDS has not seen meaningful feature investment in years, and it is no longer viable in the modern cloud stack. Microsoft’s strategic direction for data governance, catalog, and master data management is now centered on Azure Purview/Microsoft Purview, Microsoft Fabric, and partner MDM solutions rather than SQL Server-hosted services.

The Verdict: Is the SQL Server 2025 Upgrade Worth It?

The bottom line financial case for upgrading to SQL Server 2025 is strong.

SQL Server 2025 is a major step forward in many ways. It’s more secure and reliable, and offers companies much greater flexibility through changes to the Express, Standard, and Developer editions.

Whether you are new to SQL Server or want to upgrade your on-prem, hybrid, or cloud SQL Server databases, ProcureSQL can help. Schedule a free assessment to ensure you’re getting the most return on your investment in using SQL Server or Azure SQL Databases.